Playing with Siri Intents

25 Oct 2018

I’ve been enjoying playing with the new Siri Intents in iOS 12, and obviously didn’t need much of an excuse to get my Yeltzland app on yet another platform!

Shortcuts from NSUserActivity

It was pretty easy to get some basic integrations with Siri Shortcuts working. I was already using NSUserActivity in each view of the app to support Handoff between devices, so it was quite simple to extend that code for Siri Shortcuts.

For example, on the fixture list page I can add the following:

    // activity is the current NSUserActivity object
    if #available(iOS 12.0, *) {
        activity.isEligibleForPrediction = true
        activity.suggestedInvocationPhrase = "Fixture List"
        activity.persistentIdentifier = String(format: "%@.com.bravelocation.yeltzland.fixtures", Bundle.main.bundleIdentifier!)
    }

Making the activity eligible for prediction means it can be used as a Siri Shortcut. The suggested invocation phrase is the hint shown when you add the shortcut in Settings and record the phrase that will open the app directly on the Fixture List view from Siri.
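For context, here is a minimal sketch of where that snippet might sit in a view controller. The class name and the activity type string are made up for illustration and are not the app's real values; the sketch also assumes the activity type is listed under `NSUserActivityTypes` in Info.plist, as Handoff already requires.

```swift
import UIKit
import Intents

// Hypothetical view controller; the name and activity type are illustrative only.
final class FixtureListViewController: UIViewController {
    // Assumed activity type; it should also appear under NSUserActivityTypes in Info.plist.
    private static let activityType = "com.example.yeltzland.fixtures"

    override func viewDidAppear(_ animated: Bool) {
        super.viewDidAppear(animated)

        let activity = NSUserActivity(activityType: FixtureListViewController.activityType)
        activity.title = "Fixture List"
        activity.isEligibleForHandoff = true

        if #available(iOS 12.0, *) {
            // These properties are what make the activity usable as a Siri Shortcut.
            activity.isEligibleForPrediction = true
            activity.suggestedInvocationPhrase = "Fixture List"
            activity.persistentIdentifier = FixtureListViewController.activityType
        }

        // Attach the activity to this view controller and mark it as current,
        // which makes it available to Handoff and (on iOS 12) to Siri suggestions.
        self.userActivity = activity
        activity.becomeCurrent()
    }
}
```

When Siri runs the shortcut, the app receives the activity through the same `application(_:continue:restorationHandler:)` app delegate callback that Handoff uses, so any existing navigation code for Handoff should be reusable.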

Building a full custom Siri Intent

Probably the most useful app feature to expose via a full Siri Intent is the latest Halesowen score. By that I mean an intent that will speak the latest score, as well as showing a custom UI to nicely format the information.

There are plenty of good guides on how to build a custom Siri Intent out there, so I won’t add much detail on how I did this here.

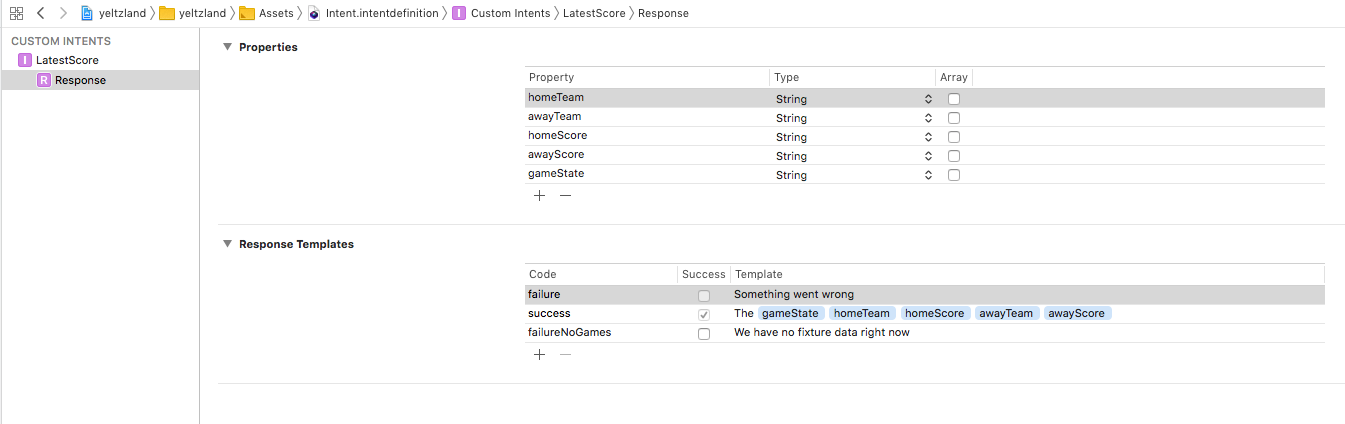

However, one strange issue I couldn’t find a proper fix for was that, when I used a number as a custom property in the Siri response, I couldn’t get the response to be spoken.

As you can see from the setup below, I got around this by passing the game score as a string rather than a number, but I wasted a long time trying to debug that issue. I still have no idea why a number doesn’t work as expected.
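The string workaround can be sketched roughly as follows. The `LatestScore` type, its field names and the output format are all hypothetical, chosen only to show the numbers being turned into text before they reach the Siri response.

```swift
import Foundation

// Hypothetical model of the latest game; not the app's real data type.
struct LatestScore {
    let opponent: String
    let teamScore: Int
    let opponentScore: Int
    let isHomeGame: Bool
}

// Builds the score as plain text, home side first, so it can be passed
// to a String-typed response property instead of a numeric one.
func scoreText(for game: LatestScore) -> String {
    let team = "Halesowen \(game.teamScore)"
    let other = "\(game.opponent) \(game.opponentScore)"
    return game.isHomeGame ? "\(team), \(other)" : "\(other), \(team)"
}

// In the intent handler, the result would be assigned to whichever
// String property was defined for the response in the intent definition file.
let example = LatestScore(opponent: "Visitors", teamScore: 2, opponentScore: 1, isHomeGame: true)
print(scoreText(for: example)) // prints "Halesowen 2, Visitors 1"
```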

Building a custom UI to show alongside the spoken text was also pretty easy. I’m quite happy with the results - you can see it all working in the video below.

To make the shortcut discoverable, I added an “Add to Siri” button on the Latest Score view itself. This is really easy to hook up to the intent by simply passing it in the tap handler of the button like this:

    if let shortcut = INShortcut(intent: intent) {
        let viewController = INUIAddVoiceShortcutViewController(shortcut: shortcut)
        viewController.modalPresentationStyle = .formSheet
        viewController.delegate = self
        self.present(viewController, animated: true, completion: nil)
    }
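Because the code above sets `viewController.delegate = self`, the presenting view controller also needs to conform to `INUIAddVoiceShortcutViewControllerDelegate`. A minimal sketch of that conformance might look like this, assuming a hypothetical `LatestScoreViewController` class; the app's real handling may well differ.

```swift
import UIKit
import Intents
import IntentsUI

// Hypothetical view controller standing in for the Latest Score view.
final class LatestScoreViewController: UIViewController {}

@available(iOS 12.0, *)
extension LatestScoreViewController: INUIAddVoiceShortcutViewControllerDelegate {
    // Called when the user has recorded a phrase, or when adding the shortcut failed.
    func addVoiceShortcutViewController(_ controller: INUIAddVoiceShortcutViewController,
                                        didFinishWith voiceShortcut: INVoiceShortcut?,
                                        error: Error?) {
        if let error = error {
            print("Adding the shortcut failed: \(error.localizedDescription)")
        }
        controller.dismiss(animated: true, completion: nil)
    }

    // Called when the user backs out without adding the shortcut.
    func addVoiceShortcutViewControllerDidCancel(_ controller: INUIAddVoiceShortcutViewController) {
        controller.dismiss(animated: true, completion: nil)
    }
}
```

Both callbacks dismiss the system sheet explicitly, since the add-shortcut view controller does not dismiss itself.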

I’m sure you’ll agree the view itself looks pretty classy 🙂

Summary

It was a lot of fun hooking everything up to Siri, and I’m really pleased with how it all turned out.

Overall I think opening up Siri to 3rd party apps could be game-changing for the platform. Previously Siri was so far behind both Google and Amazon that it was almost unusable except for the most basic of tasks. However, now that it can start working with the apps you use all the time, I can see it being a truly useful assistant.

Siri is still a way behind of course, but once custom parameterised queries are introduced - presumably in iOS 13 - and if the speech recognition can be improved, it is definitely going to be a contender in the voice assistant market.

I’m also looking forward to Google releasing their similar in-app Actions at some point soon.

Exciting times ahead!